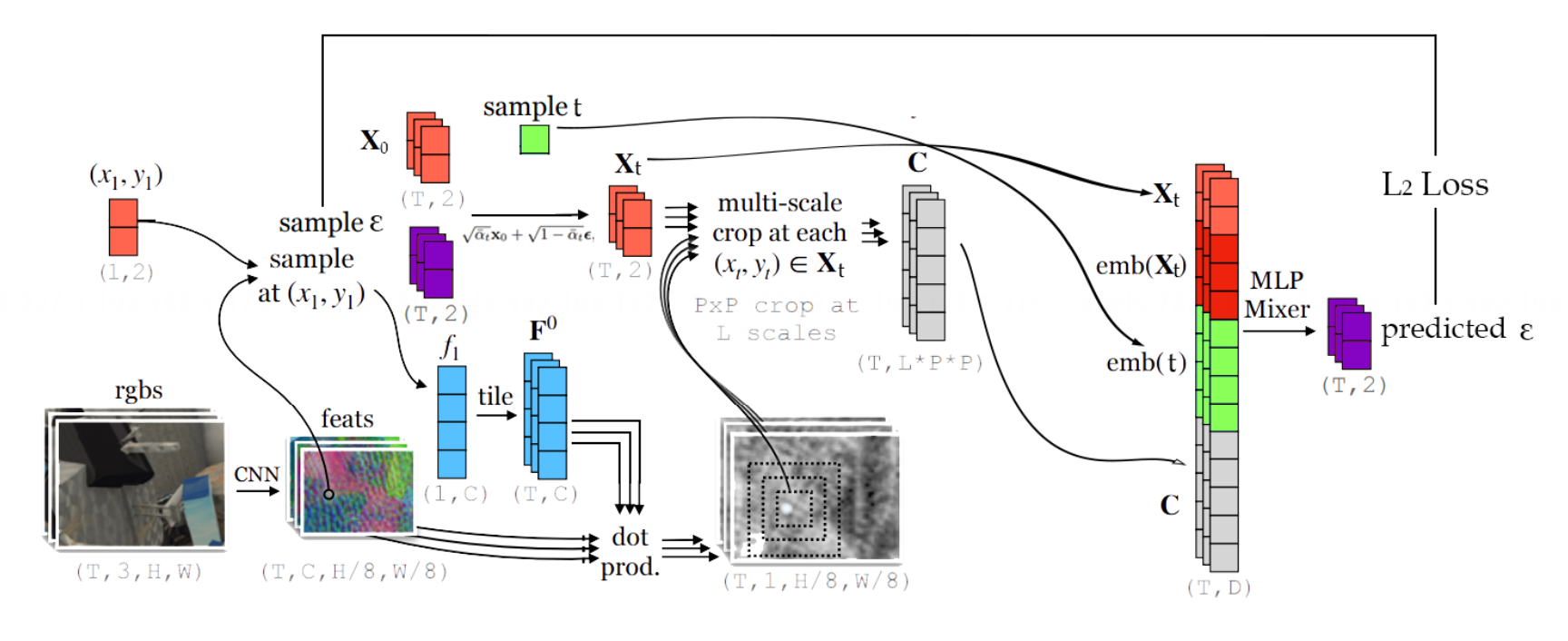

The state of the art in pixel tracking, both in optical flow and in multi-frame motion estimation, is driven by algorithms that learn an iterative refinement procedure: the model begins with a noisy estimate of the target's trajectory and iteratively updates this estimate with deltas until the estimated trajectory matches the ground truth. In parallel, research in generative models has developed denoising diffusion procedures, in which a generated sample is initialized with random noise and the model iteratively updates it by applying deltas until the sample matches a real data point. In this project, we first make this connection between the two areas concrete. Second, we aim to leverage recent progress in scaling up denoising generative models to implement (and scale up) a denoising-based pixel tracker.
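To illustrate the parallel described above, here is a minimal sketch of the loop the two settings share: start from a noisy state and repeatedly add a predicted delta. It is not the project's implementation. The names `refine` and `make_toy_delta` are hypothetical, and the delta predictor is a toy stand-in that already knows its target, whereas a real tracker or diffusion model would use a learned network (and a diffusion sampler would typically also follow a noise schedule).

```python
import random

def refine(estimate, predict_delta, num_steps):
    """Shared loop: repeatedly add a predicted delta to the current estimate."""
    for step in range(num_steps):
        delta = predict_delta(estimate, step)
        estimate = [e + d for e, d in zip(estimate, delta)]
    return estimate

def make_toy_delta(target, rate=0.5):
    """Hypothetical stand-in for a learned network.

    It moves a fixed fraction of the way toward a known target, which a
    real model would not have access to at inference time.
    """
    def predict_delta(estimate, step):
        return [rate * (t - e) for t, e in zip(target, estimate)]
    return predict_delta

rng = random.Random(0)  # fixed seed so the toy example is repeatable

# Tracking view: 1D position of one point over four frames,
# initialized with a noisy trajectory estimate.
true_track = [0.0, 1.0, 2.0, 3.0]
noisy_track = [p + rng.gauss(0.0, 1.0) for p in true_track]
refined_track = refine(noisy_track, make_toy_delta(true_track), num_steps=10)

# Generative view: a sample initialized with pure random noise.
data_point = [0.2, -0.7, 1.5, 0.0]
noise = [rng.gauss(0.0, 1.0) for _ in data_point]
sample = refine(noise, make_toy_delta(data_point), num_steps=10)

track_error = max(abs(r - t) for r, t in zip(refined_track, true_track))
sample_error = max(abs(s - d) for s, d in zip(sample, data_point))
print(f"max track error: {track_error:.4f}, max sample error: {sample_error:.4f}")
```

Both calls go through the same `refine` function; only the initialization and the target differ, which is the structural correspondence the paragraph points to.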